Looking into how various operations perform as the size of the sheet increase provided some interesting data. The objective is to see whether the time to execute particular operations is directly proportional to the size of the sheet.

First of all, the test

The test

A scheduled task ran every minute reading and writing, inserting and deleting data to a sheet, and gradually increasing the number of rows. It started at 1000 rows (20 columns), and each task removed 100 and added 250 rows – a net 150 row increase for each iteration. Since there is some kind of caching involved behind the scenes, the data was randomized to ensure that it actually got rewritten, and the test was repeated with and without a flush operation between each data manipulation.

The results

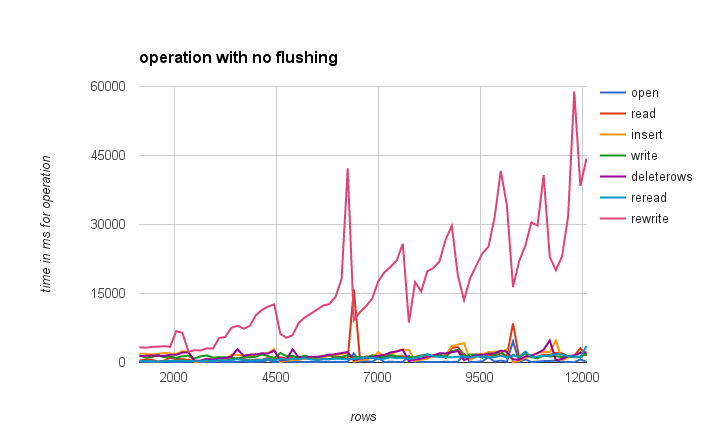

Here’s the first result, with no flushing.

Description

| operation | what it does |

| open | opens the workbook and sheet |

| read | reads all the data in one go |

| insert | inserts 250 rows |

| write | writes data to 250 rows |

| deleterows | deletes 100 rows |

| reread | reads all the data again |

| rewrite | writes all the data |

Findings

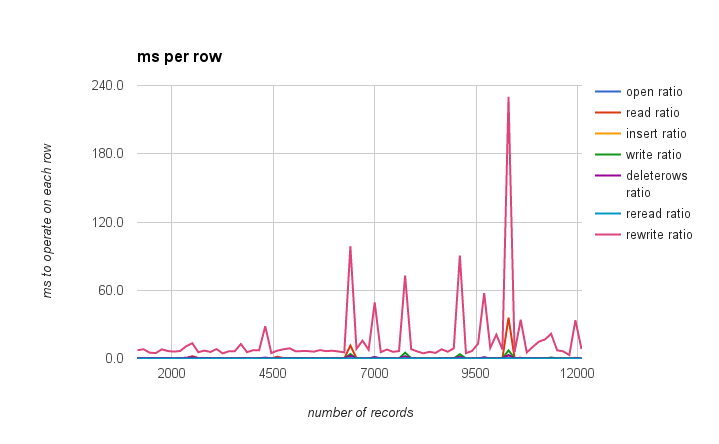

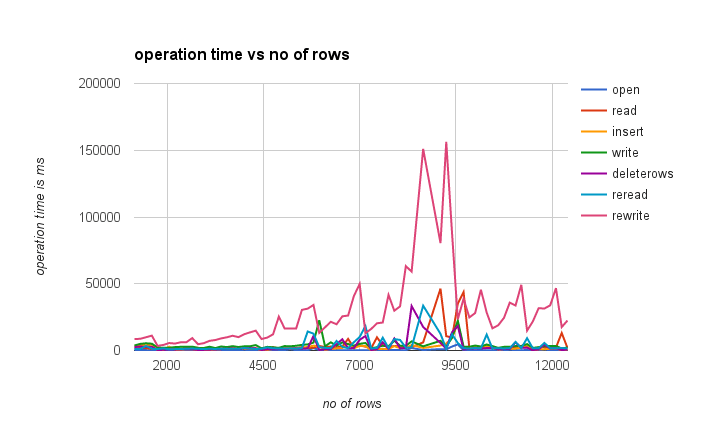

The rewrite operation is by far the most expensive, and is not proportional to the number of records. The other operations are pretty good. There is a strange pattern of a spike followed by a gradual increase till the next spike. Further analysis – this time normalizing by dividing the time by the number of rows gives an operation time per row. If this was strictly linear, it would be a straight line. This highlights these spikes every 1000 rows, not just for the rewrite operation (although it is most pronounced), but for the others too.

Clearly there is some kind of housekeeping done every now and again – watch this space for more analysis.

The Code

Here are the tests, triggered every minute.

/**

* generate a sheet full of random data

*/

function generateData() {

// get some random data

var randomData = cUseful.getRandomSheetStrings(1000, 20, 2, 20);

// get the data sheeet

var sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName("data");

// clear it

sheet.clear();

if (sheet.getLastRow() > 1) {

sheet.deleteRows(2, sheet.getLastRow() -1);

}

// write it

sheet.getRange(1,1,randomData.length, randomData[0].length).setValues(randomData);

}

function doThingsFlush() {

doThings(true,'logflush');

}

function doThingsNoFlush() {

doThings(false,'lognoflush');

}

/**

* do some things on the sheet

*/

function doThings(flush,name) {

// how many rows to add / delete each time

var addRows = 250, delRows = 100;

var now = new Date().getTime();

var stats = {rows:0};

// get the data sheeet

var sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName("data");

stats.open = new Date().getTime() - now;

var now = new Date().getTime();

// read the data

var values = sheet.getDataRange().getValues();

stats.read = new Date().getTime() - now;

var now = new Date().getTime();

// insert some rows

var range = sheet.getRange (Math.floor(values.length/2)+1, 1, addRows ,values[0].length);

sheet.insertRowsAfter(range.getRow()-1, range.getNumRows());

if(flush)SpreadsheetApp.flush();

stats.insert = new Date().getTime() - now;

var now = new Date().getTime();

// add some data

var data = cUseful.getRandomSheetStrings(range.getNumRows(), range.getNumColumns(), 2, 20);

range.setValues(data);

if(flush)SpreadsheetApp.flush();

stats.write = new Date().getTime() - now;

var now = new Date().getTime();

// delete some rows

var range = sheet.getRange (Math.floor(values.length/3)+1, 1, delRows ,values[0].length);

sheet.deleteRows(range.getRow(), range.getNumRows());

if(flush)SpreadsheetApp.flush();

stats.deleterows = new Date().getTime() - now;

var now = new Date().getTime();

// reread the data

var data = sheet.getDataRange().getValues();

stats.reread = new Date().getTime() - now;

// generate different data to avoid any caching effects

var data = cUseful.getRandomSheetStrings(data.length, data[0].length, 2, 20)

var now = new Date().getTime();

// rewrite the data

var range = sheet.getRange (1,1,data.length, data[0].length);

range.setValues(data);

if(flush)SpreadsheetApp.flush();

stats.rewrite = new Date().getTime() - now;

stats.rows = data.length;

stats.columns = data[0].length;

// the results.

var sheet = SpreadsheetApp.getActiveSpreadsheet().getSheetByName(name);

sheet.getRange (1,1,1,Object.keys(stats).length).setValues([Object.keys(stats)]);

sheet.getRange (sheet.getLastRow()+1,1,1,Object.keys(stats).length)

.setValues([Object.keys(stats).map(function(k){ return stats[k]})]);

}

If you want to try these for yourself, you’ll need the cUseful library.

For more like this, see Performance. Why not join our forum, follow the blog or follow me on Twitter to ensure you get updates when they are available.