Sharing secrets between the local development environment and the target platform is often awkward. Kubernetes secrets are a really simple solution once you are running in a cluster, but they are hard to get hold of in a local development environment. GCP secrets are the opposite: handy to pull into the local development environment, but hard to get at once you are in Kubernetes. Doppler is a flexible and neat solution for injecting secrets into local builds. Let’s look at these 3 secret managers working together.

GCP secrets

I’ve written about the Secret Manager API a few times – for example SuperFetch Plugin: Cloud Manager Secrets and Apps Script. Its role in this article is to provide the secrets required for build and configuration scripting.

Kubernetes secrets

If you are running in a Kubernetes cluster, injecting Kubernetes secrets into the env variables is the simplest solution. Its role here is to provide secrets to containers running on the Kubernetes cluster.

Doppler secrets

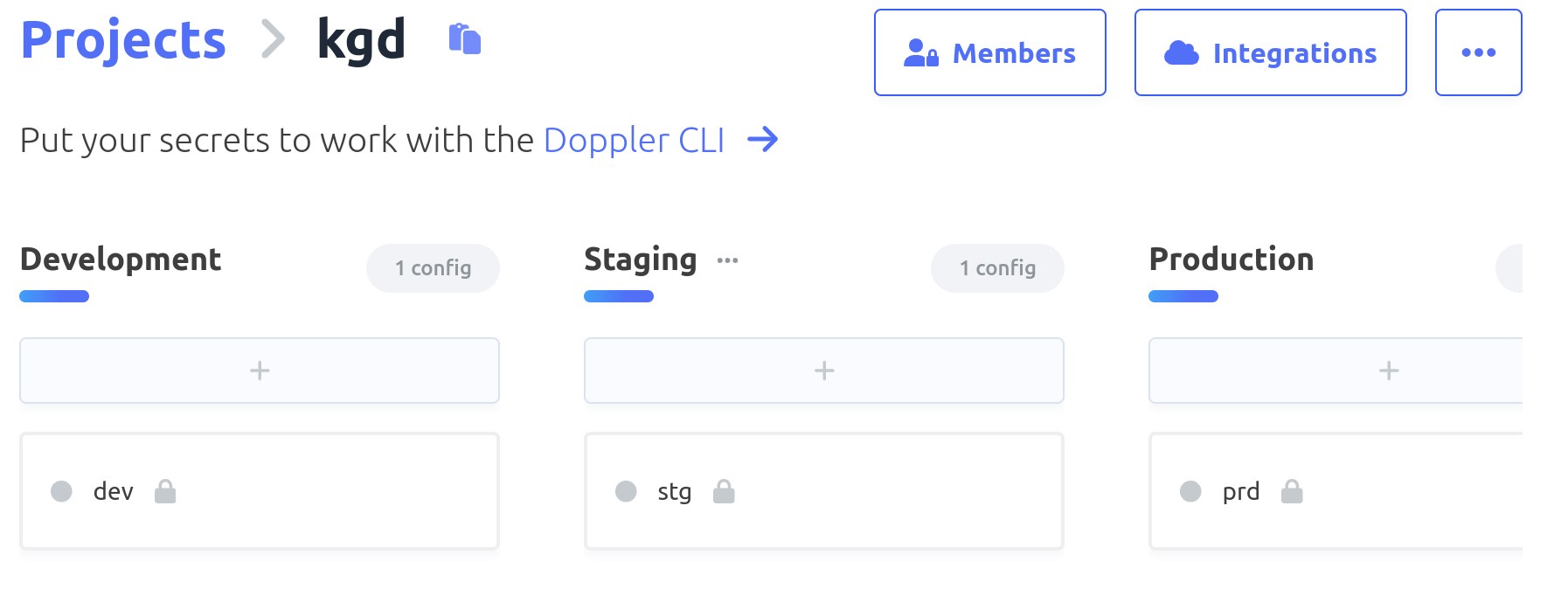

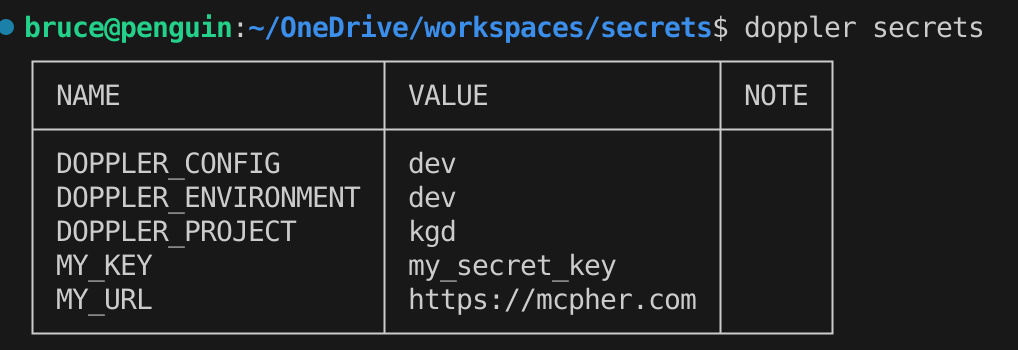

These are my source of truth. Doppler secrets are set up per project, and within each project by config, e.g. dev, staging and production.

Getting started with Doppler

Visit doppler.com and register. It’s free up to a point. Create a project and it’ll automatically set up 3 configs for you.

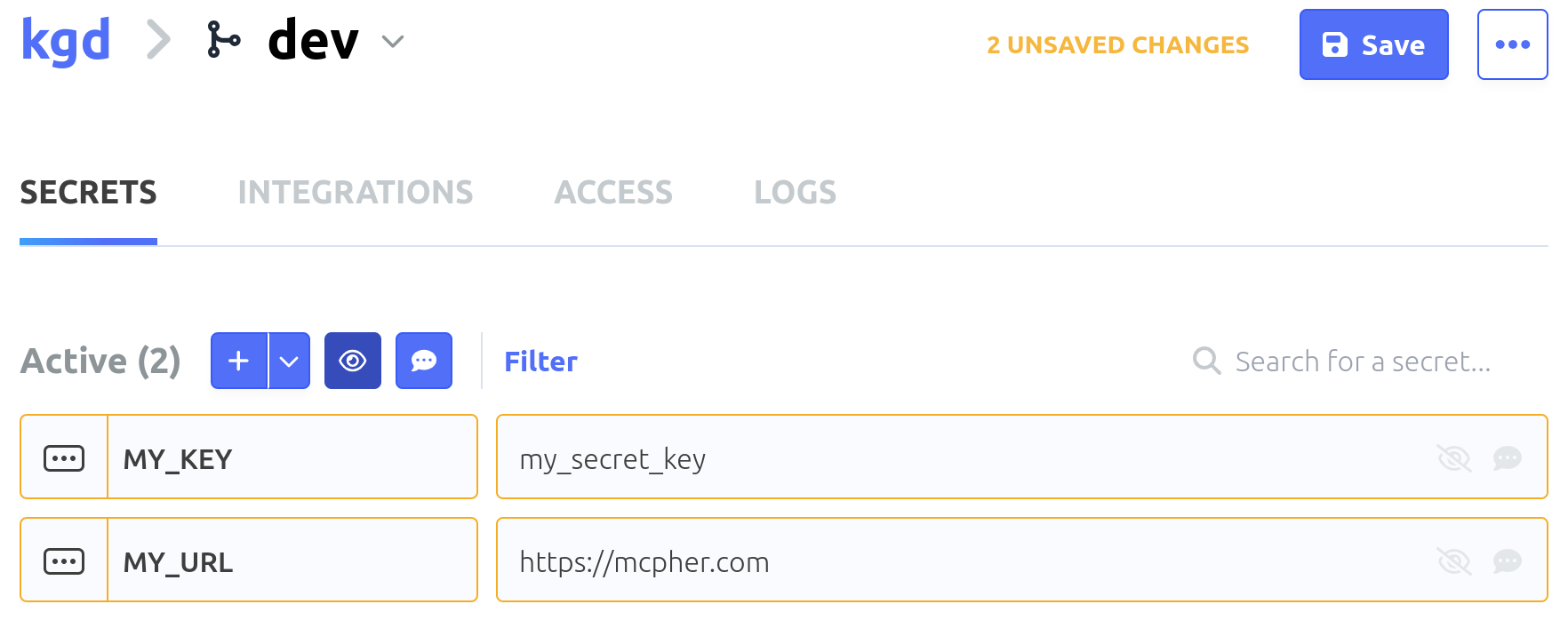

Let’s add a couple of secrets to the dev config – don’t forget to SAVE when done.

Doppler CLI

Next we’ll install the Doppler CLI so we can get at these secrets. Pick the installation method that suits your environment. It works on Mac, Linux and Windows.

Log in to Doppler

This is actually pretty slick. The login command opens a login dialog in the browser and copies an authorization code to the clipboard, which you can then paste into that dialog.

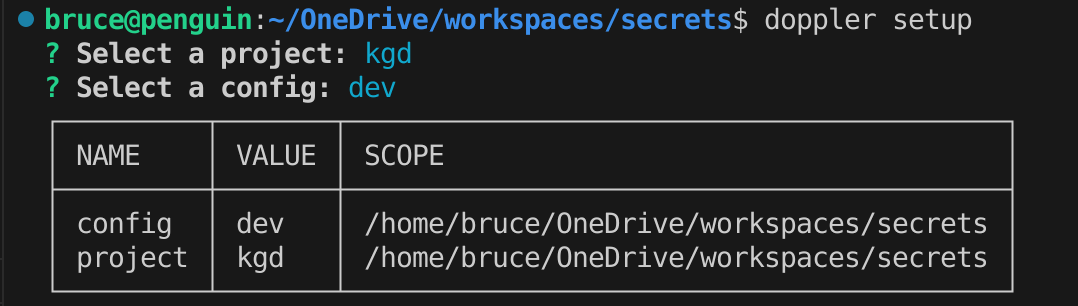

Set up Doppler locally

This lets you pick a project and config, and installs a token so you can access the secrets from the CLI.

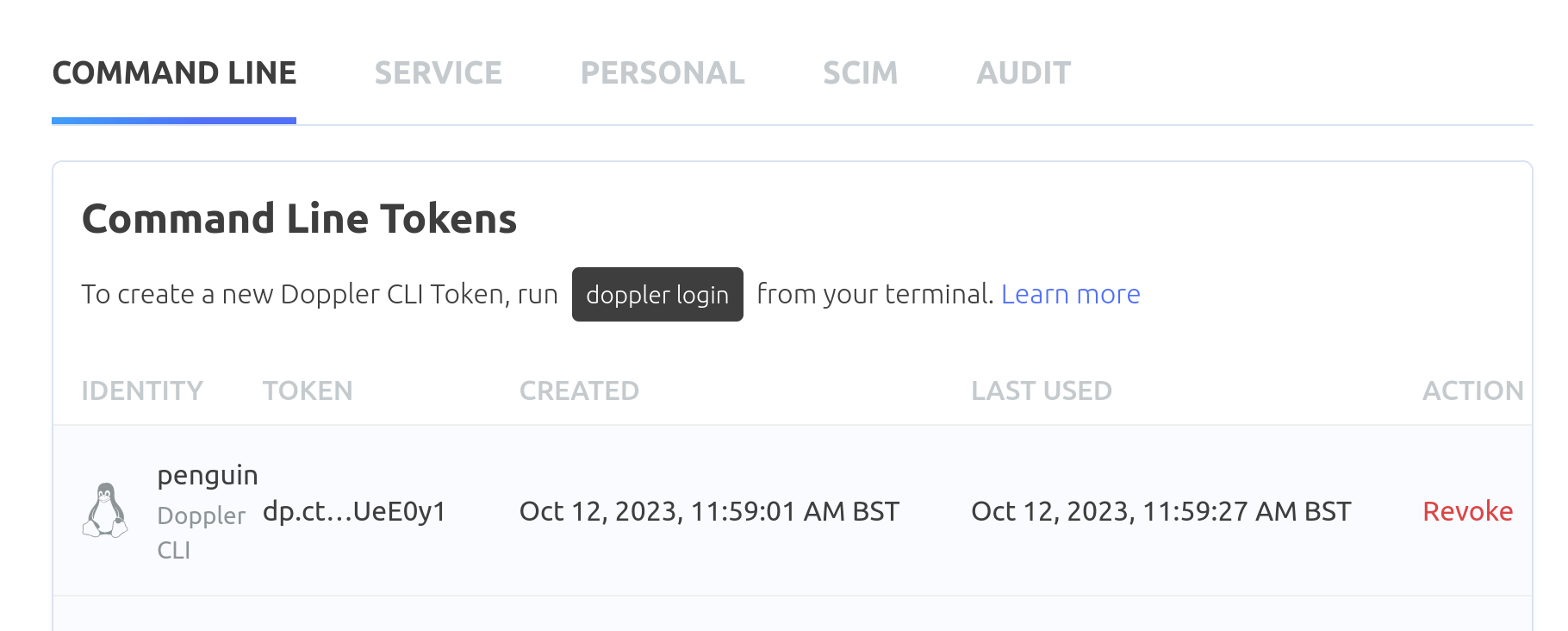

These are known as ‘command line tokens’. There are other types of tokens, some of which I’ll cover later.

You should now be able to access those secrets locally.
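As a minimal sketch, the login, setup and listing steps map onto three Doppler CLI subcommands. The function names here are just illustrative wrappers so that nothing runs until you call them; the first two steps are interactive.

```shell
# One-off interactive steps, wrapped in a function so nothing runs until called.
doppler_first_time_setup() {
  doppler login   # browser-based authorization
  doppler setup   # choose the project and config for the current directory
}

# Print the secrets for the project and config selected by `doppler setup`.
doppler_list_secrets() {
  doppler secrets
}
```

Run `doppler_first_time_setup` once in the project directory, then `doppler_list_secrets` whenever you want to check what the CLI can see.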

GCP secrets

To minimize the visibility of secret (and config) info, I have just one file locally, gcp.json, which looks like this. This could be expanded for different configs, but let’s keep it to a single config for now. The project property is my GCP project id, and configSecret is the name of a GCP secret containing anything required for local scripts: for example the region or, if you are doing CI/CD as I am, trigger names and artifact details. In other words, everything to do with GCP that I’ll need to access locally in a script.
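A minimal sketch of such a file, written from the shell. The two property names (project and configSecret) come from the description above; both values are placeholders, and the function name is only for illustration.

```shell
# Write a minimal gcp.json. Both values are placeholders, not real ids.
write_gcp_config() {
  local file="${1:-gcp.json}"
  cat > "$file" <<'EOF'
{
  "project": "my-gcp-project-id",
  "configSecret": "my-build-config"
}
EOF
}
```

Note that the file only names the GCP secret. It holds no secret values itself.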

GCP Secret Manager console

In the cloud console Secret Manager, you can create a GCP secret whose name matches the configSecret property in gcp.json, containing things like the below – anything you need to be able to build your project. Note that at this point I’m not referencing any of the secrets held in Doppler, but I do have a Doppler service token. Earlier, in the section on command line tokens, I mentioned other types of tokens. A service token can be created in the same place and is the Doppler equivalent of a Google service account. It allows me to delegate access to my Doppler secrets: in my case I want Cloud Build to be able to access them on my behalf, so I can use them as build substitutions in my cloudbuild file.
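As a rough sketch of what such a secret might hold, and how it could be created from the command line rather than the console. Every property name in the JSON (region, artifactRepo, triggerName, dopplerToken) and every value is a placeholder invented for illustration; only `gcloud secrets create` and its flags are standard.

```shell
# Hypothetical shape of the config secret. All names and values are placeholders.
build_config_json() {
  cat <<'EOF'
{
  "region": "europe-west2",
  "artifactRepo": "my-repo",
  "triggerName": "my-trigger",
  "dopplerToken": "dp.st.REPLACE_ME"
}
EOF
}

# Create the secret named by configSecret in gcp.json.
# Assumes gcloud is installed and authenticated for the project.
create_config_secret() {
  local project="$1" secret_name="$2"
  build_config_json | gcloud secrets create "$secret_name" \
    --project="$project" --replication-policy=automatic --data-file=-
}
```

For example, `create_config_secret my-gcp-project-id my-build-config` pipes the JSON straight into Secret Manager without writing it to disk.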

Using GCP secrets in scripts

First you’ll need to install jq, a super handy command-line utility for extracting property values from JSON, which works well from within a bash script.
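A minimal sketch of installing jq and using it to read one property from a JSON file. The install commands assume Homebrew or apt; json_prop is a hypothetical helper name.

```shell
# Install (pick the one for your platform):
#   macOS with Homebrew:  brew install jq
#   Debian/Ubuntu:        sudo apt-get install -y jq

# Read one top-level property from a JSON file as a raw string.
json_prop() {
  local file="$1" key="$2"
  jq -r --arg key "$key" '.[$key]' "$file"
}
```

So `json_prop gcp.json project` would print the project id, and `json_prop gcp.json configSecret` the name of the GCP secret.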

Here’s an example script using the secrets above to create an artifact registry. The name of the GCP secret is picked up from the local gcp.json, and the other required values come from the value of the GCP secret.
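A sketch of how such a script could look, assuming the GCP secret is a JSON document with region and artifactRepo properties (both names are hypothetical) and that a Docker-format repository is wanted.

```shell
# Create an Artifact Registry repository using values held in the GCP config secret.
# Assumes gcloud is authenticated and jq is installed.
create_artifact_repo() {
  local config_file="${1:-gcp.json}"
  local project secret_name config region repo

  # The local file only tells us which project and which secret to read.
  project=$(jq -r '.project' "$config_file")
  secret_name=$(jq -r '.configSecret' "$config_file")

  # Everything else comes from the latest version of the GCP secret.
  config=$(gcloud secrets versions access latest \
    --secret="$secret_name" --project="$project")
  region=$(printf '%s' "$config" | jq -r '.region')        # hypothetical property
  repo=$(printf '%s' "$config" | jq -r '.artifactRepo')    # hypothetical property

  gcloud artifacts repositories create "$repo" \
    --project="$project" --location="$region" --repository-format=docker
}
```

Calling `create_artifact_repo` with no argument reads gcp.json from the current directory.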

Creating a build trigger

Here’s another example, this time creating a build trigger.
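A sketch along the same lines, assuming a GitHub-hosted repository and a cloudbuild.yaml at the repo root. The property names read from the GCP secret (triggerName, repoOwner, repoName, branchPattern, dopplerToken) and the _DOPPLER_TOKEN substitution name are all hypothetical.

```shell
# Pull one property out of a JSON string.
config_prop() {
  printf '%s' "$1" | jq -r --arg k "$2" '.[$k]'
}

# Create a Cloud Build trigger, passing the Doppler service token as a substitution.
# Assumes gcloud is authenticated and jq is installed.
create_build_trigger() {
  local config_file="${1:-gcp.json}"
  local project secret_name config
  project=$(jq -r '.project' "$config_file")
  secret_name=$(jq -r '.configSecret' "$config_file")
  config=$(gcloud secrets versions access latest \
    --secret="$secret_name" --project="$project")

  gcloud builds triggers create github \
    --project="$project" \
    --name="$(config_prop "$config" triggerName)" \
    --repo-owner="$(config_prop "$config" repoOwner)" \
    --repo-name="$(config_prop "$config" repoName)" \
    --branch-pattern="$(config_prop "$config" branchPattern)" \
    --build-config="cloudbuild.yaml" \
    --substitutions="_DOPPLER_TOKEN=$(config_prop "$config" dopplerToken)"
}
```

User-defined Cloud Build substitutions must start with an underscore, hence the `_DOPPLER_TOKEN` name.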

Doppler GCP integrations

Back in the section on the Doppler config dashboard, you’ll see a tab called integrations. It’s possible to create a synced relationship between Doppler and GCP secrets. In other words, the Doppler secrets are copied over to a matching GCP secret whenever they are updated. This is certainly another option, but I found it a little fiddly because of the service accounts and IAM changes required, and in any case I like the idea of keeping build secrets separate from run secrets, while providing a way to access both.

Kubernetes secrets

Some of these secrets are really configuration items rather than secrets, but I have just lumped them all together as secrets – you could split into configmaps and secrets if you wanted to differentiate visibility. One of the great things about Kubernetes is how easy it is to inject secrets (and configmaps) into your app environment. Now we’ll see how to create Kubernetes secrets in a similar way to the scripts used for building.

Bash versions

macOS ships with an old version of Bash (3.2) for licensing reasons, and it can’t run these scripts, so if you are using a Mac you’ll need to make sure you pick up a later version.

If you have a Mac with this problem, you can install a later version with
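A minimal sketch, assuming Homebrew is your package manager. The version check treats Bash 4 or later as "new enough", which is an assumption; the helper names are illustrative.

```shell
# macOS with Homebrew: install a current Bash alongside the system one.
#   brew install bash

# Report the major version of the first bash found on PATH.
bash_major() {
  bash -c 'echo "${BASH_VERSINFO[0]}"'
}

# Succeeds only when that bash is version 4 or later (assumed threshold).
bash_is_modern() {
  [ "$(bash_major)" -ge 4 ]
}
```

Starting scripts with `#!/usr/bin/env bash` rather than `#!/bin/bash` means they pick up the newer Bash once it is first on your PATH.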

Create Kubernetes secret

This will work on both minikube and Kubernetes, whichever your kubectl context is set to. It’s going to create a Kubernetes secret from the Doppler secrets, referencing the GCP build secrets to discover what to call everything and where to put it. This can be part of the Kubernetes deployment process, as we are setting desired state, and changes will only happen if there’s been a change back in Doppler.
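A simplified sketch of the idea. It takes the secret name and namespace as arguments rather than discovering them from the GCP build secret, and it assumes a Unix-like system where /dev/stdin is available. The function name is hypothetical.

```shell
# Create or update a Kubernetes secret from the current Doppler config.
# Uses the project/config chosen by `doppler setup`, or a service token
# if DOPPLER_TOKEN is set in the environment.
sync_kube_secret() {
  local secret_name="$1" namespace="${2:-default}"

  # KEY=value lines from Doppler, never written to disk.
  doppler secrets download --no-file --format docker |
    # Render the secret manifest locally without touching the cluster...
    kubectl create secret generic "$secret_name" \
      --namespace="$namespace" \
      --from-env-file=/dev/stdin \
      --dry-run=client -o yaml |
    # ...then apply it, so an unchanged secret is a no-op.
    kubectl apply -f -
}
```

Rendering with `--dry-run=client` and piping into `kubectl apply` is what makes this safe to rerun on every deployment: it declares the desired state instead of failing when the secret already exists.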

Checking the kube secret

You can check if it’s been created correctly by selecting a secret to see its value
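As a minimal sketch, this reads one key back out of a secret and decodes it (Kubernetes stores secret values base64-encoded). The function name and its arguments are illustrative.

```shell
# Print the decoded value of one key in a Kubernetes secret.
kube_secret_value() {
  local secret_name="$1" key="$2" namespace="${3:-default}"
  kubectl get secret "$secret_name" --namespace="$namespace" \
    -o "jsonpath={.data.$key}" | base64 --decode
}
```

For example, `kube_secret_value app-secrets API_KEY` would print the value of a (placeholder) API_KEY entry.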

Using the kube secret

Finally, we can inject these Kubernetes secrets, which originated in Doppler, as env variables into whichever deployment specs need them.
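A sketch of a deployment spec that does this, generated from the shell so it can be piped straight into kubectl. The app name, image and secret name are placeholders; the relevant part is the envFrom/secretRef stanza, which exposes every key in the secret as an env variable.

```shell
# Print a minimal Deployment manifest that injects a whole secret as env variables.
deployment_manifest() {
  local app="$1" image="$2" secret_name="$3"
  cat <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: $app
spec:
  replicas: 1
  selector:
    matchLabels:
      app: $app
  template:
    metadata:
      labels:
        app: $app
    spec:
      containers:
        - name: $app
          image: $image
          envFrom:
            - secretRef:
                name: $secret_name
EOF
}
```

Usage would be something like `deployment_manifest my-app my-image:latest app-secrets | kubectl apply -f -`.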

Local injection of doppler secrets

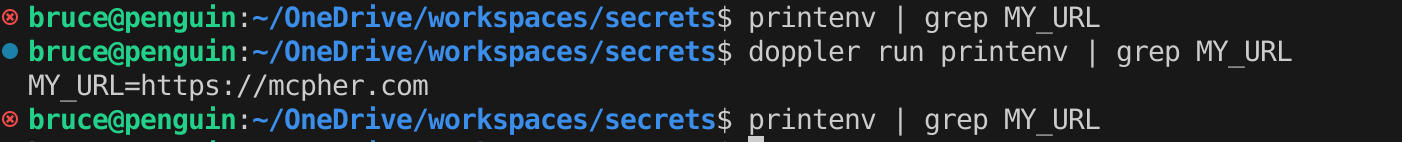

You’ll still want access to the Doppler secrets locally, and you can see there are a number of ways to achieve this in the example scripts above. However, Doppler also allows injection of values into env variables locally.

You could even use it to substitute directly from the shell – for example

However, let’s focus on env injection.
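A minimal sketch of direct substitution, using `doppler secrets get NAME --plain` to print a single raw value. API_KEY and API_URL are placeholder secret names, and the curl call is just a stand-in for whatever command needs them.

```shell
# Substitute Doppler values straight into a command line.
# API_KEY and API_URL are hypothetical secret names.
call_api() {
  curl -s -H "Authorization: Bearer $(doppler secrets get API_KEY --plain)" \
    "$(doppler secrets get API_URL --plain)"
}
```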

Doppler run <command>

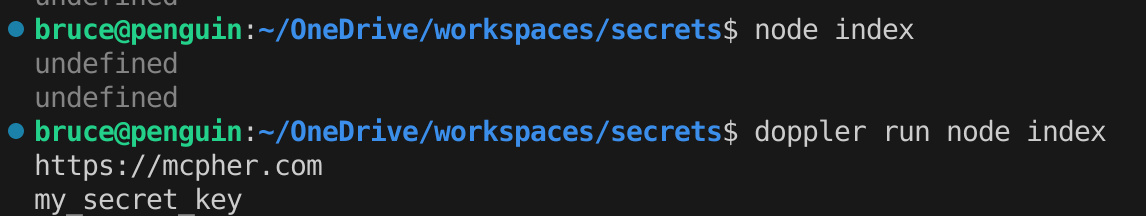

The Doppler run command injects all the secrets it knows about into the env, then runs the command. Let’s test it like this:
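A minimal sketch of such a test: run printenv under `doppler run` and ask for one variable. The function name is illustrative, and the secret name you pass should be one that exists in your config.

```shell
# Print one injected secret by running printenv under `doppler run`.
show_injected() {
  doppler run -- printenv "$1"
}
```

For example, `show_injected MY_SECRET` prints the value of a (placeholder) MY_SECRET entry, while a plain `printenv MY_SECRET` outside `doppler run` prints nothing.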

This means of course that we can access those same values in a Node app. Let’s try this:
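As a minimal sketch, a one-line Node script run under `doppler run` can read the injected value from process.env. The function name is hypothetical and the argument is whichever secret name you want to check.

```shell
# Read one injected secret from inside Node via process.env.
node_reads_secret() {
  doppler run -- node -e "console.log(process.env['$1'])"
}
```

In a real app you would simply start it with `doppler run -- node app.js` (app.js being a placeholder) and read `process.env` as usual.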

Summary

These 3 secret manager solutions combined to fit your environment make a very flexible secret management solution, with not a single .env file or local copies of secrets necessary.

Of course this won’t suit everybody, particularly those who want more fine-grained access control, but it’s just fine for small teams, who will likely benefit from the free tier in each of these solutions.