This is part of a series of posts on memcache and Kubernetes. Getting memcache up and running on Kubernetes explained how to create your first cluster, Installing memcache with Kubernetes installed some memcache instances on that cluster, and Exposing a memcache loadbalancer made them available externally. You’ll also have created a test app in your favorite Node environment and tested the memcache integration, as described in Creating a test app for memcache on Kubernetes. Now the fun starts: running the app in the same Kubernetes cluster as the memcached pods.

Preparing the environment

We need to create a Docker image next so that Kubernetes can host the app. You could do this in whatever development environment you are using, but these often run in a container themselves and don’t support Docker (Cloud 9, for example). So for this next bit, I suggest you prepare your image using the cloud shell. Here are the steps:

- Push your source code to github (mine is here). Google has its own private code repository if you prefer to use that, but I’m sticking with github.

- Create a Dockerfile describing the image you want to create

- If you’ve followed the same pattern as I used in Creating a test app for memcache on Kubernetes, you will have some secrets listed in your .gitignore file that you don’t want to push to github. Kubernetes provides a neat way to look after secrets, but for now, let’s just copy your secrets file to the directory you’ve prepared in the cloud shell to build your image. This assumes you’ve also initialized a target directory there as a git repo with git init.

- Build the image and push it to the Google container registry

- Create a deployment and service for your app

- Expose a load balancer to get to it. In real life, you’d want to make an ingress controller, and implement SSL (as described in HTTPS ingress for Kubernetes service) but for this demo, we’ll just expose a non-ssl loadbalancer to keep on point.

Google cloud build was released last month, and it is precisely for automating the business of deployment. I’ll cover Cloud build in a later post, but for now, we’ll just go through the steps (semi) manually.

Make docker image

Here’s a simple shell script to pull your latest source, make the image and push it to the container registry.

- Go to the repo directory and pull the latest source

- build a new image locally

- tag it

- push it to the Google container registry

    HERE=$PWD
    echo "building new image $HERE"
    echo "pulling latest source"
    cd mcdemo
    git pull git@github.com:brucemcpherson/mcdemo.git
    cp -r * $HERE
    cd $HERE
    echo "building new image"
    docker build -t brucemcpherson/mcdemo .
    echo "pushing to registry"
    docker tag brucemcpherson/mcdemo gcr.io/fid-prod/mcdemo
    gcloud docker -- push gcr.io/fid-prod/mcdemo

The Dockerfile

You’ll find this in my git repo.

- Uses Google Nodejs image as a base

- Copies the app’s source code

- Copies the local version of the secrets file

- Builds in the Node dependencies

- Sets a couple of environment variables to control the app’s behavior

- Runs the App

    #googles node image
    FROM launcher.gcr.io/google/nodejs
    MAINTAINER Bruce Mcpherson <bruce@mcpher.com>
    #create a workdirectory
    WORKDIR /usr/src/mcdemo
    ## copy the source
    COPY index.js .
    COPY src .
    COPY package.json .
    # secrets file needs to be resident locally
    COPY private/* .
    #install the dependencies
    RUN npm install
    #tell app which port to use
    ENV PORT 8081
    # and the mode
    ENV MODE ku
    #how to run it
    CMD [ "node", "index.js" ]
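The two ENV lines only have an effect if the app reads them. Here’s a minimal sketch of how a Node app might pick them up. The readConfig helper, the fallback values and the error handling are my own illustrative assumptions, not code from the mcdemo repo; only the PORT and MODE names and their values come from the Dockerfile.

```javascript
// Hypothetical helper: the real mcdemo app may read its settings differently.
function readConfig(env = process.env) {
  // Environment variables always arrive as strings, so convert PORT to a number.
  // The "8081" fallback is an assumption for running outside the container.
  const port = Number.parseInt(env.PORT || "8081", 10);
  if (Number.isNaN(port)) {
    throw new Error(`PORT must be a number, got "${env.PORT}"`);
  }
  // MODE is "ku" in the Dockerfile; "local" is an assumed default
  const mode = env.MODE || "local";
  return { port, mode };
}

// Simulate the environment the container would provide
console.log(readConfig({ PORT: "8081", MODE: "ku" }));
```

Passing the environment in as an argument keeps the helper easy to try out without building the image.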

Create a deployment

We’ll run 2 instances of the app, which will share access to the memcached service.

    $ kubectl run mcdemo --replicas=2 --image=gcr.io/fid-prod/mcdemo --port=8081 --labels="run=mcdemo-app,app=mcdemo"

    $ kubectl get deployment
    NAME     DESIRED   CURRENT   UP-TO-DATE   AVAILABLE   AGE
    mcdemo   2         2         2            2           46m

You should now see 2 new pods in your cluster

    NAME                      READY   STATUS    RESTARTS   AGE
    mcdemo-566dfddd79-jd2fd   1/1     Running   0          49m
    mcdemo-566dfddd79-x8bd6   1/1     Running   0          49m
    mycache-memcached-0       1/1     Running   0          2d
    mycache-memcached-1       1/1     Running   0          2d
    mycache-memcached-2       1/1     Running   0          2d

Create a service

We’ll need a service to talk to the app. Let’s put the definition in a file called server-service.yaml and apply it with kubectl apply -f server-service.yaml

    apiVersion: v1
    kind: Service
    metadata:
      name: mcdemo-service
      labels:
        app: mcdemo-service
    spec:
      type: ClusterIP
      ports:
      - port: 8081
        targetPort: 8081
      selector:
        run: mcdemo-app
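The selector is what ties this service to the pods: it picks up any pod carrying the label run=mcdemo-app, which is one of the labels we set with --labels when creating the deployment. The service’s own label (app: mcdemo-service) plays no part in that matching. Here’s a simplified model of the rule, written as a sketch rather than anything Kubernetes itself runs; the matchesSelector function is a hypothetical name of mine.

```javascript
// Simplified model of equality-based label selection (not Kubernetes code):
// a pod is selected when every key/value pair in the selector
// is also present in the pod's labels.
function matchesSelector(selector, labels) {
  return Object.entries(selector).every(([key, value]) => labels[key] === value);
}

const podLabels = { run: "mcdemo-app", app: "mcdemo" }; // from --labels on kubectl run
const serviceSelector = { run: "mcdemo-app" };          // from server-service.yaml

console.log(matchesSelector(serviceSelector, podLabels));           // true
console.log(matchesSelector({ app: "mcdemo-service" }, podLabels)); // false
```

If the selector and the pod labels ever drift apart, the service will still be created, but it won’t have any pods behind it.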

Expose the service

As previously mentioned, to create a secure version of this service you’d follow the instructions in HTTPS ingress for Kubernetes service, but we’re just going to create a simple loadbalancer, just like we did to expose the memcache service in Exposing a memcache loadbalancer.

$ kubectl expose service mcdemo-service --port=8081 --target-port=8081 --name=mcdemo-expose --type=LoadBalancer

After a little while you’ll get an external ip address, and if all has gone well – you can use this to access your app from a browser

    $ kubectl get service
    NAME                TYPE           CLUSTER-IP      EXTERNAL-IP      PORT(S)           AGE
    kubernetes          ClusterIP      10.27.240.1     <none>           443/TCP           2d
    mc                  LoadBalancer   10.27.244.1     35.xxx.xxx.xxx   11211:30588/TCP   2d
    mcdemo-expose       LoadBalancer   10.27.243.143   35.xxx.xxx.xxx   8081:31143/TCP    50m
    mcdemo-service      ClusterIP      10.27.250.152   <none>           8081/TCP          53m
    mycache-memcached   ClusterIP      None            <none>           11211/TCP         2d

Does it work?

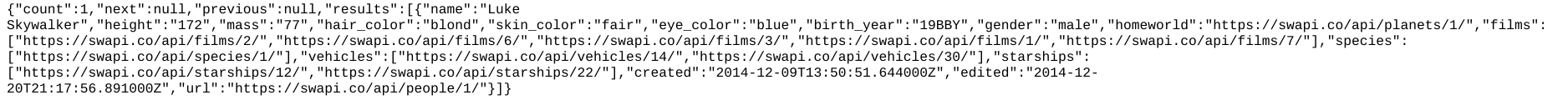

- searching: http://35.xxx.xxx.xxx:8081/starwars/people?search=luke

- time without cache: 950 ms to complete

- timing with cache: hit: http://swapi.co/api/people?search=luke 25960109ae793d5f3e351a5fb946726963a35f7c, 1 ms to complete

- timing with cache (after stopping and starting the app): hit: http://swapi.co/api/people?search=luke 25960109ae793d5f3e351a5fb946726963a35f7c, 25 ms to complete

So in principle, it works. But when we go back to our regular Node app, we find that cache written inside Kubernetes isn’t necessarily seen by Node, and vice versa. To understand why this is, we have to look at how memcached works.

- each memcached server is independent. It is distributed and doesn’t know anything about the others

- for a given client, the same key is guaranteed to hit the same server, which is why a distributed version can work under normal circumstances

- different clients using the same key don’t necessarily hit the same server
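These points are easier to see with a toy example. In the sketch below the client, not the server, decides where a key lives by hashing it against its own list of servers. The pickServer function and the hash-modulo scheme are assumptions I’ve made for illustration; real memcached clients use their own (often consistent-hashing) schemes. The server names are the pod names from the cluster, and the second list is simply a hypothetical client that holds them in a different order.

```javascript
const crypto = require("crypto");

// Toy server picker: hash the key and take it modulo the number of servers.
// This is an illustrative scheme, not what any particular memcached client does.
function pickServer(key, servers) {
  const digest = crypto.createHash("sha256").update(key).digest();
  return servers[digest.readUInt32BE(0) % servers.length];
}

// Pod names from the cluster; the two client views are assumptions
const insideCluster = ["mycache-memcached-0", "mycache-memcached-1", "mycache-memcached-2"];
const differentView = ["mycache-memcached-2", "mycache-memcached-0", "mycache-memcached-1"];

const key = "25960109ae793d5f3e351a5fb946726963a35f7c";

// Same client, same key: always the same server
console.log(pickServer(key, insideCluster) === pickServer(key, insideCluster)); // true

// A client holding the list in a different order lands on a different server
console.log(pickServer(key, insideCluster), pickServer(key, differentView));
```

Each client is perfectly consistent with itself, but because the servers don’t talk to each other, a value written by one client can be invisible to another that picks a different server for the same key.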

Next steps

This means we have to do a little more work and introduce a memcached router, mcrouter. That’s covered in Using mcrouter with memcached on Kubernetes.