I recommend that you save your commands in various scripts so you can repeat them or modify them later.

Deploying the demo app

A Kubernetes deployment is a kind of “wish list”. It states that you’d like an app to be run, with various parameters and a number of instances, but not how or where to run it. Kubernetes takes care of that by creating and managing “pods” in which the app runs (actually multiple apps can run in a pod, but our example only has one). The point of this approach is that when something fails, Kubernetes recovers by itself and restarts the app on the best available node in your cluster, without your involvement.

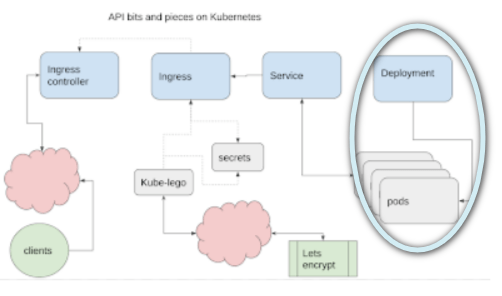

The deployment covers this part of the process.

Namespaces

In Kubernetes, you can keep resources separated by assigning them to namespaces. This example will stick to the default namespace, so you can see how deployments interact with other resources already running on the same cluster.
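If you want to be explicit about which namespace you are looking at, a small wrapper like the one below can help. It is only a sketch: `list_deployments_in` is a name I made up for illustration, and it assumes `kubectl` is already pointed at your cluster.

```shell
# List the deployments in one namespace.
# list_deployments_in is a hypothetical helper name, not part of kubectl.
# With no argument it falls back to the "default" namespace used in this example.
list_deployments_in() {
  kubectl get deployments --namespace "${1:-default}"
}
```

Calling `list_deployments_in` with no argument shows what is in the default namespace, and `list_deployments_in kube-system` shows the cluster's own system deployments.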

Deploying

The deployment command references the image created in Building your App ready for Kubernetes deployment, a copy of which is stored in the Google Container Registry. Its other flags are:

- --replicas=2 means run 2 instances of the app. Kubernetes will create a pod for each one, and decide where and how to run it.

- --labels="run=playback-app" will allow this deployment to be found and referenced by a service in a later step.

deploy-server.sh

    # deploy the server back end
    kubectl run playback --replicas=2 --image=gcr.io/fid-sql/playback --port=8080 --labels="run=playback-app"
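The same wish list can also be written down declaratively as a manifest. This is worth knowing because, as far as I am aware, kubectl 1.18 and later no longer accept --replicas on kubectl run (it creates a single pod there). The sketch below is my own equivalent, not part of the original script: the function name, the output file name and the container name `playback` are assumptions, while the image, port, replica count and label come from deploy-server.sh.

```shell
# Write a Deployment manifest equivalent to deploy-server.sh.
# write_playback_manifest and the default file name are hypothetical.
write_playback_manifest() {
  out="${1:-playback-deployment.yaml}"
  cat > "$out" <<'EOF'
apiVersion: apps/v1
kind: Deployment
metadata:
  name: playback
  labels:
    run: playback-app
spec:
  replicas: 2                 # same as --replicas=2
  selector:
    matchLabels:
      run: playback-app       # must match the pod template labels below
  template:
    metadata:
      labels:
        run: playback-app     # same as --labels="run=playback-app"
    spec:
      containers:
      - name: playback        # assumed container name
        image: gcr.io/fid-sql/playback
        ports:
        - containerPort: 8080 # same as --port=8080
EOF
}

# Usage (needs a cluster, so it is left commented out here):
#   write_playback_manifest playback-deployment.yaml
#   kubectl apply -f playback-deployment.yaml
```

Keeping the manifest in a file fits the advice about saving your commands in scripts: you can change the replica count in one place and apply it again.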

Check the deployment

    kubectl get deploy
    NAME       DESIRED   CURRENT   UP-TO-DATE   AVAILABLE   AGE
    playback   2         2         2            2           8m

Kubernetes spins up 2 pods for you, 1 for each replica

    kubectl get pods
    playback-cb6dd9dfb-l2qrq   1/1   Running   0   4m
    playback-cb6dd9dfb-tmmrn   1/1   Running   0   4m

Check the logs, using the pod names

    kubectl logs playback-cb6dd9dfb-l2qrq
    > playback@1.0.0 start /usr/src/kube
    > node index.js
    v4 listening on:0.0.0.0:8080
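Pod names change every time a pod is recreated, so it can be handier to ask for logs by label instead of by name. The sketch below assumes the run=playback-app label from deploy-server.sh; `logs_for_label` and the default of 20 lines are my own choices for illustration.

```shell
# Print recent log lines from every pod that carries a given label.
# logs_for_label is a hypothetical helper name, not part of kubectl.
#   $1: label selector (defaults to the label set in deploy-server.sh)
#   $2: number of lines per pod (defaults to 20, an arbitrary choice)
logs_for_label() {
  label="${1:-run=playback-app}"
  kubectl logs --selector="$label" --tail="${2:-20}"
}
```

Running `logs_for_label` with no arguments should show the start-up lines from both replicas without you having to look up their names first.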

Next step

The app is now deployed and running. If you delete a pod, Kubernetes will start another; this is how it “self heals”. However, it’s only running internally on the cluster for now, so it’s not possible to talk to the app yet. The next step is to create a Service to communicate with the pods. See Getting an API running in Kubernetes for how.
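If you’d like to see the self healing for yourself before moving on, something like the following sketch should do it. It assumes the run=playback-app label from deploy-server.sh, and `delete_one_pod` is a hypothetical helper name of mine.

```shell
# Delete one pod of the deployment so that Kubernetes has to replace it.
# delete_one_pod is a hypothetical helper name, not part of kubectl.
delete_one_pod() {
  label="${1:-run=playback-app}"
  # -o name prints entries such as pod/playback-cb6dd9dfb-l2qrq
  victim="$(kubectl get pods --selector="$label" -o name | head -n 1)"
  if [ -z "$victim" ]; then
    echo "no pods match $label" >&2
    return 1
  fi
  kubectl delete "$victim"
  # List the pods again; a replacement with a new name suffix should show up.
  kubectl get pods --selector="$label"
}
```

After calling `delete_one_pod`, the list should be back to 2 pods shortly afterwards, one of them with a new name and a much younger age.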