There are a few articles on this site about getting more into (and out of) the cache and property services. My cUseful library can now create caching plugins that use some of those techniques with a variety of backend stores.

Some of the limitations of the cache and property store are –

- Sharing across projects is not supported (but can be hacked around with the use of libraries)

- Limited payload sizes, and cache lifetimes

- Property service rate limiting

- Key inflexibility

- Data limited to strings

This technique helps to get round that by

- Using plugins to support different kinds of cache stores, databases and files (any kind of store), which also makes it possible to share data across projects

- Automatic compression, and spreading data across multiple cached items in stores that have a small data size

- Built in exponential backoff

- Keys can be automatically constructed from objects.

- Supporting blobs in property stores
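
To give a feel for what built-in exponential backoff means, here is a minimal sketch. It is not the cUseful implementation: withBackoff and its maxAttempts, baseMs and sleep options are hypothetical names, and it retries on any error, which a real implementation would probably narrow down to rate limit errors. The sleep function is passed in so the sketch can run anywhere; in Apps Script you could pass Utilities.sleep.

```javascript
// Hypothetical helper (not part of cUseful): retry an action, doubling the wait each time.
function withBackoff(action, options) {
  const settings = options || {};
  const maxAttempts = settings.maxAttempts || 5;
  const baseMs = settings.baseMs || 500;
  // assumption: the caller supplies a sleep function, e.g. Utilities.sleep in Apps Script
  const sleep = settings.sleep || function () {};
  for (let attempt = 1; ; attempt++) {
    try {
      return action();
    } catch (err) {
      // give up once the attempts are used up
      if (attempt >= maxAttempts) throw err;
      // wait 1x, 2x, 4x ... the base delay, plus some random jitter
      const waitMs = baseMs * Math.pow(2, attempt - 1) + Math.round(Math.random() * baseMs);
      sleep(waitMs);
    }
  }
}

// usage: an action that fails twice (as if rate limited) and then succeeds
let calls = 0;
const waits = [];
const result = withBackoff(function () {
  calls++;
  if (calls < 3) throw new Error("rate limited");
  return "ok";
}, { sleep: function (ms) { waits.push(ms); } });
```

The waits array records how long each retry would have paused, which is handy for checking the doubling behavior without actually sleeping.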

You’ll need the cUseful library

1EbLSESpiGkI3PYmJqWh3-rmLkYKAtCNPi1L2YCtMgo2Ut8xMThfJ41Ex

Built in plug-ins

The CacheService and PropertiesService are supported through plug-ins available from the cUseful library. Here’s how to use each of them. Drive is also supported, and is written up in Google Drive as cache.

Properties Service

const crusher = new cUseful.CrusherPluginPropertyService().init ({

store:PropertiesService.getScriptProperties()

});

Cache Service

const crusher = new cUseful.CrusherPluginCacheService().init ({

store:CacheService.getUserCache()

});

Methods

3 methods are supported

crusher.put ( key , value [,expiry]);

crusher.get ( key );

crusher.remove (key);

key

This can be of any type, or even an object, for example

crusher.put ( "monday" , value);

crusher.put ( {url:"https://example.com",header:{Authorization:"Bearer ytxxx"}} , value);
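
How the library turns an object into a store key is not covered here, so the following is only a sketch of one possible approach: serialize the object with its property names sorted (so that property order does not matter), then hash the result to keep the key short. The names stableStringify and makeKey are hypothetical, the hash is a simple non-cryptographic one, and the sketch assumes plain JSON-style data.

```javascript
// Hypothetical helpers (not part of cUseful).
// Serialize with sorted property names so {a:1,b:2} and {b:2,a:1} give the same text.
function stableStringify(value) {
  if (value === null || typeof value !== "object") return JSON.stringify(value);
  if (Array.isArray(value)) return "[" + value.map(stableStringify).join(",") + "]";
  return "{" + Object.keys(value).sort().map(function (name) {
    return JSON.stringify(name) + ":" + stableStringify(value[name]);
  }).join(",") + "}";
}

// Reduce any key (string or object) to a short string usable as a store key.
function makeKey(key) {
  const text = typeof key === "string" ? key : stableStringify(key);
  // djb2-style 32 bit hash, kept unsigned with >>> 0
  let hash = 5381;
  for (let i = 0; i < text.length; i++) {
    hash = ((hash * 33) ^ text.charCodeAt(i)) >>> 0;
  }
  return "k" + hash.toString(16);
}

// usage: the same request described in a different property order gives the same key
const keyA = makeKey({ url: "https://example.com", header: { Authorization: "Bearer ytxxx" } });
const keyB = makeKey({ header: { Authorization: "Bearer ytxxx" }, url: "https://example.com" });
```

A 32 bit hash can collide, so anything beyond a demonstration would want a stronger digest.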

Value

This can be of any type, for example

crusher.put ("name" , "bruce");

crusher.put ("apiresult", {data:"xyz"}, 20);

crusher.put ("today", new Date());

crusher.put ("myimage",file.getBlob());

Getting an item reconstitutes it in its original form. A missing item returns null.

const str = crusher.get ("name");

const obj = crusher.get ("apiresult");

const dat = crusher.get ("today");

const blob = crusher.get ("myimage");

An item can be removed like this, irrespective of type.

crusher.remove ("name");

How does it work?

The main techniques are:

- If data is over a certain size, then it will be automatically compressed and uncompressed when retrieved

- If data is still too large for a given store’s limits (which you can set), then it will create a series of linked items, which are reconstituted when retrieved.

- Objects are stringified and re-parsed automatically when detected

- Dates are converted to timestamps, then back again when retrieved

- Blobs are converted to base64, preserving their content type and name, and reconverted when retrieved

- The store is abstracted from the crusher, so the methods are exactly the same, irrespective of which underlying store is being used.
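
The techniques in the list above can be sketched in a few lines. This is an illustration under stated assumptions, not the cUseful code: packValue, unpackValue, putChunked and getChunked are hypothetical names, a Map stands in for the store, and compression and blobs are left out because they depend on Apps Script services. The value is tagged with its type, split into pieces no bigger than chunkSize, and a master entry records the linked chunk keys.

```javascript
// Hypothetical sketch: type tagging plus chunking over a Map used as the store.
function packValue(value) {
  // dates become timestamps, everything else is stringified with a type tag
  if (value instanceof Date) return JSON.stringify({ type: "date", data: value.getTime() });
  if (typeof value === "object" && value !== null) return JSON.stringify({ type: "object", data: value });
  return JSON.stringify({ type: "plain", data: value });
}

function unpackValue(text) {
  const wrapped = JSON.parse(text);
  return wrapped.type === "date" ? new Date(wrapped.data) : wrapped.data;
}

function putChunked(store, key, value, chunkSize) {
  const text = packValue(value);
  const chunkKeys = [];
  for (let start = 0; start < text.length; start += chunkSize) {
    const chunkKey = key + "_chunk_" + chunkKeys.length;
    store.set(chunkKey, text.slice(start, start + chunkSize));
    chunkKeys.push(chunkKey);
  }
  // the master entry links to the chunks that make up the value
  store.set(key, JSON.stringify({ chunks: chunkKeys }));
  return chunkKeys.length;
}

function getChunked(store, key) {
  const master = store.get(key);
  if (master === undefined) return null; // a missing item returns null
  const text = JSON.parse(master).chunks.map(function (chunkKey) {
    return store.get(chunkKey);
  }).join("");
  return unpackValue(text);
}

// usage: a tiny chunk size forces even small values to be spread over several entries
const demoStore = new Map();
const chunkCount = putChunked(demoStore, "apiresult", { data: "xyz" }, 22);
const demoResult = getChunked(demoStore, "apiresult");
```

This sketch always writes a master entry and never cleans up chunks left over from an earlier, longer value under the same key.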

Options

These examples for the built in property stores and cache stores show some initialization options.

Minimal

You need to at least pass a store to use

Cache store

const crusherCache = new cUseful.CrusherPluginCacheService().init ({

store:CacheService.getUserCache()

});

Property store

const crusherProperty = new cUseful.CrusherPluginPropertyService().init ({

store:PropertiesService.getScriptProperties()

});

You can set a few other options to change the behavior, although there’s probably not much call for them in normal usage (other than plug-in testing). This example sets a small chunk size (which forces the data to be spread over many entries) and a very small compression threshold. Compression normally increases the size of anything under about 200 bytes, so by default anything under 250 bytes is not compressed.

const crusherCacheChunkZip = new cUseful.CrusherPluginCacheService().init ({

store:CacheService.getUserCache(),

chunkSize:22,

compressMin:8

});

Plug-ins

You can write your own plug-ins to support other stores such as databases, files .. even spreadsheets. Essentially – anything that can be used as a key/value store.

As a simple extension, here’s how to use Google Cloud Storage as a store. It uses my cGcsStore library (described in GcsStore overview – Google Cloud Storage and Apps Script), which has exactly the same methods as the Cache Service, so we can simply re-use the CacheService plugin. The benefits of Cloud Storage over the Apps Script services are

- Much bigger items can be written in a single file

- The lifetime of items can be short (as a cache) or permanent (as in property store), or anywhere in between

- You can share data across projects, or even outside of Apps Script

- You can organize the data into folders to create any kind of scope you want, as opposed to just the user, script or document scopes of the Apps Script services.

First we need a little set up, as OAuth2 is required.

You’ll need the

GcsStore overview – Google Cloud Storage and Apps Script library

1w0dgijlIMA_o5p63ajzcaa_LJeUMYnrrSgfOzLKHesKZJqDCzw36qorl

and

OAuth2 for Apps Script in a few lines of code

1v_l4xN3ICa0lAW315NQEzAHPSoNiFdWHsMEwj2qA5t9cgZ5VWci2Qxv2

Goa setup

- Go to the cloud console project hosting the storage bucket you’ll use for this purpose (create it if necessary), generate a service account with the storage admin role, and download the JSON credential file to Drive.

- Create a one-off function that looks like this, substituting the file ID of the file you just downloaded.

function oneOffgcs() {

// used by all using this script

var propertyStore = PropertiesService.getScriptProperties();

// DriveApp.createFile(blob)

// service account for cloud download

cGoa.GoaApp.setPackage (propertyStore ,

cGoa.GoaApp.createServiceAccount (DriveApp , {

packageName: 'gcs_cache',

fileId:'.........fileid.........',

scopes : cGoa.GoaApp.scopesGoogleExpand (['devstorage.full_control']),

service:'google_service'

}));

}

- Run it. You can delete that now if you want – it’s no longer needed.

GcsStore setup

As previously mentioned, we can use exactly the same plug-in for gcsstore as the one for the Apps Script cache service, but gcsstore needs a little setup so it knows where to write and how to do it. Modify the code below with your bucket name, a folder key (which can be used as a ‘visibility scope’ for your store) and, if required, a default expiry.

// use goa as a token service

const goa = cGoa.make ('gcs_cache',PropertiesService.getScriptProperties());

//set up a store that uses google cloud storage

const store = new cGcsStore.GcsStore()

// make sure that goa is using an account with enough privilege to write to the bucket

.setAccessToken(goa.getToken())

// set this to the bucket you are using as a property store

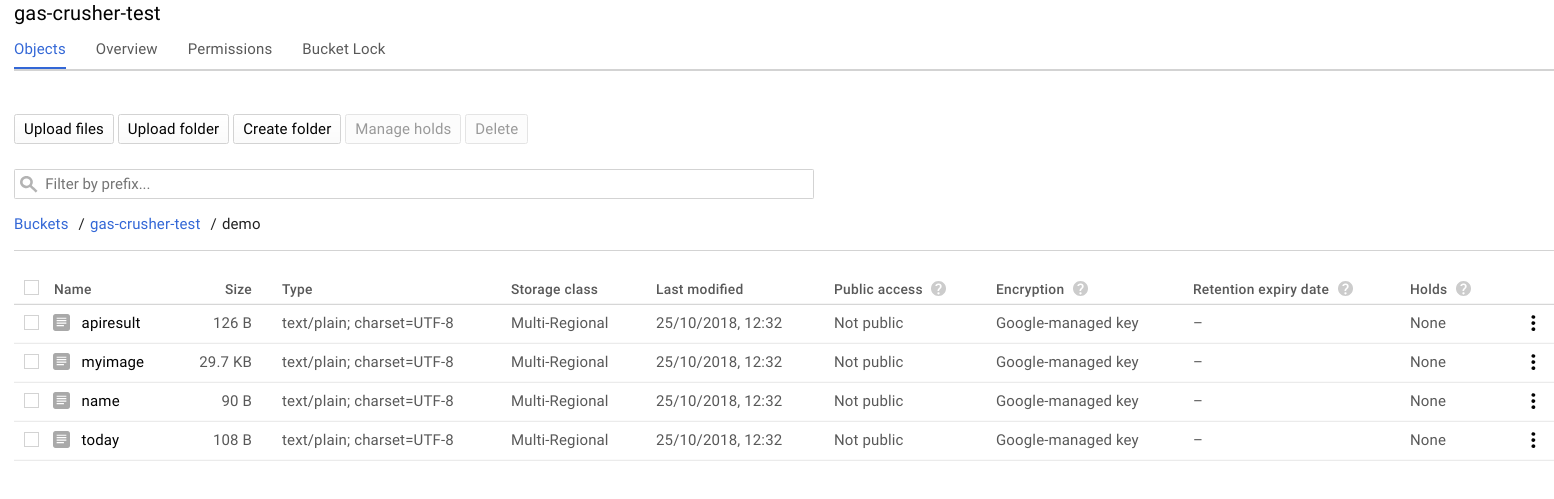

.setBucket('gas-crusher-test')

// gcsstore maintains expiry time data so that it does not return objects if they have expired

// this avoids complaining about objects in the store that don't have such data

// this allows you to use this to write

.setExpiryLog (false)

// you can use this to segregate data for different projects/users/scopes etc as you want

.setFolderKey ('demo')

// no need to compress as crusher will take care of that - no point in zipping again

.setDefaultCompress (false)

// you can set a default expiry time in seconds

// note that the item only gets actually deleted from gcsstore if you have lifetime set to some value

// Whether or not you have set lifetime, the API only returns items that have not expired

.setDefaultExpiry (6000);

That’s it – your store is ready to be passed over to the CacheService plug in like this.

// the plugin for gcsstore is exactly the same as the cacheservice plugin, so we don't

// even need a special plugin

const crusherGcs = new cUseful.CrusherPluginCacheService().init ({

store:store,

// but we can allow the objects to be much bigger with cloud store

chunkSize:1000000,

// all items are written with a default prefix for the key to avoid clashes with other items in the store

// you can set it here - since I'm using a dedicated bucket, may as well get rid of the prefix

prefix: ""

});

All the previous examples will work, except they’ll now write to cloud store instead of the cache service.

const file = DriveApp.getFileById('0B92ExLh4POiZOURvcFRBUnVnZjA');

crusherGcs.put ("name" , "bruce");

crusherGcs.put ("apiresult", {data:"xyz"}, 20);

crusherGcs.put ("today", new Date());

crusherGcs.put ("myimage",file.getBlob());

Since the cloud store is permanent, you can go there and see what’s been written using the storage browser.

Setting lifetimes with gcsstore

The cloud storage items contain expiry information in their metadata, according to how you have written them. This means that if you try to get an item that has expired it will return null (even though it may still be present in the store).

This is because cloud storage is meant to be permanent. However you can set lifecycle management for the bucket, which means that items will last for a given number of days, then be automatically deleted.
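
The difference between an item being expired and an item being deleted can be sketched like this. It is not the cGcsStore implementation: ExpiringStore and its sweep method are hypothetical, a Map stands in for the bucket, and sweep is only a stand-in for bucket lifecycle management (which in reality works on the age of objects in days). A fake clock is passed in so the behavior is repeatable.

```javascript
// Hypothetical sketch: each stored item carries its own expiry time as metadata.
function ExpiringStore(now) {
  const items = new Map();
  const clock = now || Date.now;

  // write a value that expires after the given number of seconds
  this.put = function (key, value, expirySeconds) {
    items.set(key, { value: value, expiresAt: clock() + expirySeconds * 1000 });
  };

  // expired items read as null even though they are still stored
  this.get = function (key) {
    const item = items.get(key);
    return item && item.expiresAt > clock() ? item.value : null;
  };

  // stand-in for lifecycle management: physically delete expired items
  this.sweep = function () {
    items.forEach(function (item, key) {
      if (item.expiresAt <= clock()) items.delete(key);
    });
  };

  this.size = function () {
    return items.size;
  };
}

// usage with a fake clock, in milliseconds
let fakeNow = 0;
const expiring = new ExpiringStore(function () { return fakeNow; });
expiring.put("today", "tuesday", 20);
const fresh = expiring.get("today");        // read before the expiry time
fakeNow = 21000;                            // 21 seconds later
const stale = expiring.get("today");        // read after the expiry time
const sizeBeforeSweep = expiring.size();    // the item is still physically stored
expiring.sweep();
const sizeAfterSweep = expiring.size();     // the clean up has now removed it
```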

If you are planning to use your storage bucket only for temporary data, then gcsstore supports managing this for you. Note though that it applies to the entire bucket, not just items written by gcsstore. When you create the store, add this to turn this on.

// if you are using gcs for temporary data, you can set lifecycle management on for the bucket

// this will clean up expired items after a day.

// ** CAUTION ** this applies to all data in the bucket (not just in a given folder), so ONLY use

// if the bucket's only purpose is for temporary cache data

.setLifetime (1)

Plug-in skeleton

These are rather simple to create, with very little customization required between platforms. Here’s the plug in for the property service. If you create one you’d like to share, let me know and I’ll incorporate it into the library.

function CrusherPluginPropertyService () {

// writing a plugin for the Squeeze service is pretty straightforward.

// you need to provide an init function which sets up how to init/write/read/remove objects from the store

// this example is for the Apps Script properties service

const self = this;

// these will be specific to your plugin

var settings_;

// standard function to check store is present and of the correct type

function checkStore () {

if (!settings_.store) throw "You must provide a cache service to use";

if (!settings_.chunkSize) throw "You must provide the maximum chunksize supported";

return self;

}

// start plugin by passing settings you'll need for operations

/**

* @param {object} settings these will vary according to the type of store

*/

self.init = function (settings) {

settings_ = settings || {};

// set default chunk size for the properties service

settings_.chunkSize = settings_.chunkSize || 9000;

// respect digest can reduce the number of chunks read, but may return stale

settings_.respectDigest = cUseful.Utils.isUndefined (settings_.respectDigest) ? false : settings_.respectDigest;

// must have a store and a chunk size

checkStore();

// now initialize the squeezer

self.squeezer = new cUseful.Squeeze.Chunking ()

.setStore (settings_.store)

.setChunkSize(settings_.chunkSize)

.funcWriteToStore(write)

.funcReadFromStore(read)

.funcRemoveObject(remove)

.setRespectDigest (settings_.respectDigest)

.setCompressMin (settings_.compressMin);

// export the verbs

self.put = self.squeezer.setBigProperty;

self.get = self.squeezer.getBigProperty;

self.remove = self.squeezer.removeBigProperty;

return self;

};

// return your own settings

function getSettings () {

return settings_;

}

/**

* remove an item

* @param {object} store whatever you initialized store with

* @param {string} key the key to remove

* @return {object} whatever you like

*/

function remove (store, key) {

checkStore();

return cUseful.Utils.expBackoff(function () {

return store.deleteProperty (key);

});

}

/**

* write an item

* @param {object} store whatever you initialized store with

* @param {string} key the key to write

* @param {string} str the string to write

* @return {object} whatever you like

*/

function write (store,key,str) {

checkStore();

return cUseful.Utils.expBackoff(function () {

return store.setProperty (key , str );

});

}

/**

* read an item

* @param {object} store whatever you initialized store with

* @param {string} key the key to read

* @return {object} whatever you like

*/

function read (store,key) {

checkStore();

return cUseful.Utils.expBackoff(function () {

return store.getProperty (key);

});

}

}
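
For a different store, the parts of the skeleton above that change are the remove, write and read functions. As a minimal sketch, here is what they might look like for a plain JavaScript object used as the store, which can be handy when testing. The names memoryWrite, memoryRead and memoryRemove are hypothetical; in a real plug-in they would be handed to funcWriteToStore, funcReadFromStore and funcRemoveObject as in the skeleton.

```javascript
// Hypothetical store functions for a plain object, following the (store, key, str) signatures above.
function memoryWrite(store, key, str) {
  store[key] = str;
  return str;
}

function memoryRead(store, key) {
  // return null for a missing key, as the properties service does
  return Object.prototype.hasOwnProperty.call(store, key) ? store[key] : null;
}

function memoryRemove(store, key) {
  delete store[key];
}

// usage
const memoryStore = {};
memoryWrite(memoryStore, "name", "bruce");
const nameRead = memoryRead(memoryStore, "name");
memoryRemove(memoryStore, "name");
const nameAfterRemove = memoryRead(memoryStore, "name");
```

No backoff is needed here because an in-memory object has no rate limits, but a store backed by a remote service would want the same expBackoff wrapping as the skeleton.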
